A study presented at the COLM 2024 conference highlights significant challenges faced by AI systems in solving abstract visual puzzles, suggesting ongoing limitations in reasoning capabilities.

At a recent gathering in Philadelphia for the Conference on Language Modeling (COLM) 2024, researchers from the USC Viterbi School of Engineering Information Sciences Institute (ISI) presented an intriguing study exploring the reasoning capabilities of artificial intelligence (AI) when faced with cognitive puzzles traditionally used to assess human intelligence. The study’s focal point was multi-modal large language models (MLLMs), which integrate text and image processing to potentially mirror human-like logic and reasoning.

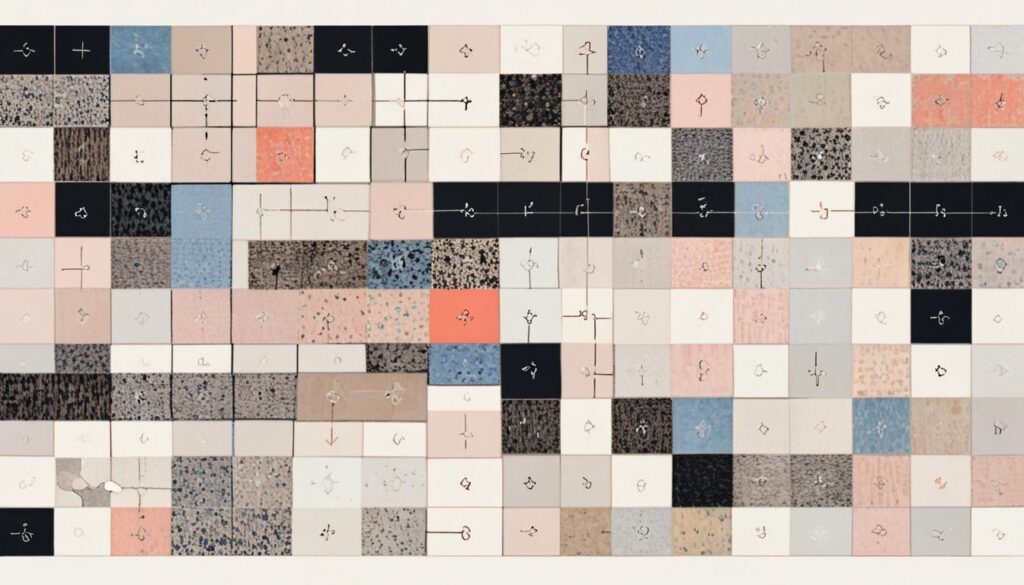

The central question of the research was whether these AI systems could solve abstract visual puzzles akin to those found in intelligence quotient (IQ) tests for humans. The researchers evaluated the models on Raven’s Progressive Matrices, a widely recognized tool for gauging abstract reasoning and visual analysis skills in humans. These puzzles demand pattern recognition, logical reasoning, and visual perception, challenging AI to mimic human cognitive processes.

The researchers tested 24 different MLLMs, both open-source and closed-source. The results revealed significant disparities, with open-source models struggling most on these sophisticated visual tasks. Kian Ahrabian, a research assistant on the project, described the models as notably inadequate at processing and interpreting patterns correctly. “They couldn’t get anything out of it,” he remarked, emphasizing the models’ inability to tackle the logic behind the visuals they were presented with.

Closed-source models such as GPT-4V performed somewhat better than their open-source counterparts. However, even these advanced models fell short of matching human cognitive abilities. Notably, the researchers found that a Chain of Thought prompting technique could improve model performance. This approach guides the AI through the reasoning steps needed to solve the puzzles, illustrating a potential path to improving AI’s logical capabilities.
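To make the idea of Chain of Thought prompting concrete, here is a minimal Python sketch of how a direct prompt and a step-by-step prompt might differ for a multiple-choice puzzle. The function names `build_prompt` and `extract_answer`, the prompt wording, and the "Answer: <number>" convention are all hypothetical illustrations, not the prompts or code used in the USC study.

```python
import re
from typing import Optional


def build_prompt(puzzle_description: str, num_choices: int, chain_of_thought: bool) -> str:
    """Build a prompt for a multiple-choice puzzle (hypothetical wording)."""
    labels = ", ".join(str(i) for i in range(1, num_choices + 1))
    lines = [
        puzzle_description,
        f"Which of the options ({labels}) completes the pattern?",
    ]
    if chain_of_thought:
        # The extra instruction asks the model to spell out its reasoning
        # before committing to an answer.
        lines.append(
            "Think step by step: describe each row, state the rule that "
            "links the panels, then apply it to the missing panel."
        )
    lines.append("Finish with a line of the form 'Answer: <number>'.")
    return "\n".join(lines)


def extract_answer(response: str) -> Optional[int]:
    """Return the last 'Answer: N' found in a model response, or None."""
    matches = re.findall(r"Answer:\s*(\d+)", response)
    return int(matches[-1]) if matches else None


if __name__ == "__main__":
    description = "A 3x3 grid of shapes is shown with the bottom-right panel missing."
    print(build_prompt(description, num_choices=4, chain_of_thought=True))
```

The only difference between the two prompt styles in this sketch is the added reasoning instruction; how a real model responds to it would have to be measured, as the researchers did.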

The disparity between open-source and closed-source AI models can be attributed partly to the resources available to developers. Closed-source variants benefit from proprietary advancements, extensive datasets, and significant computational power, factors often limited in open-source environments. Despite these advantages, models like GPT-4V, while somewhat proficient, remain far from achieving human-equivalent reasoning.

Jay Pujara, research associate professor and co-author of the study, highlighted the importance of understanding the limitations of current AI models. “This paper helps fill in a missing piece of the story of where AI struggles,” he stated. The findings underscore the ongoing challenges in developing AI models that possess comprehensive reasoning capabilities akin to those of humans.

The research at USC Viterbi is part of a broader effort to identify and address the weaknesses in AI reasoning abilities, paving the way for future advancements. As such investigations progress, they are expected to help refine AI systems so that they reason in a manner more aligned with human logical processes. For now, closing the gap in cognitive reasoning depends on insights like these.

Source: Noah Wire Services