Researchers at MIT’s CSAIL have unveiled a study of pareidolia, together with a new machine learning model and dataset, that explores how the phenomenon could help improve computer vision.

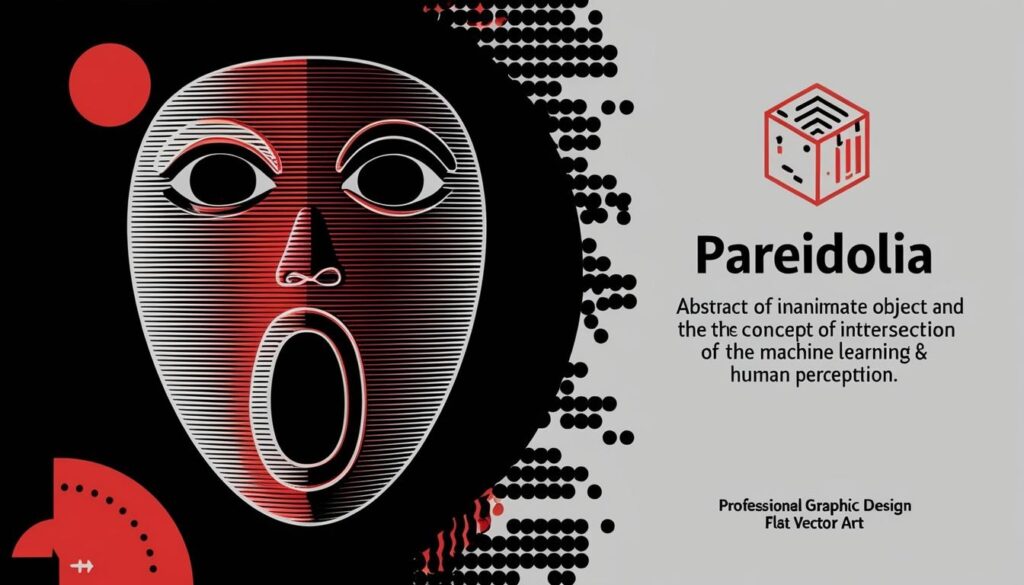

Researchers from the Massachusetts Institute of Technology’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have advanced the study of pareidolia, the phenomenon in which humans perceive faces in inanimate objects, by developing a new machine learning model and dataset. Presented at the European Conference on Computer Vision (ECCV) in October, the research offers insights into why humans experience this phenomenon and suggests a pathway for improving computer vision.

Mark Hamilton, a PhD student in electrical engineering and computer science and the study’s lead researcher, said, “What this paper really tries to do is explore the intersection between pareidolia and computer vision.” To understand how machine learning could replicate the way humans experience pareidolia, the researchers constructed the world’s first large-scale dataset built specifically for this purpose.

To build the dataset, the team sifted through a collection of 5.85 billion images and curated a set of 5,000 pareidolic faces. Hamilton explained that the dataset is intended to let researchers explore psychological concepts with computer models, running experiments without the ethical and logistical issues that commonly accompany studies with human subjects. He compared the approach to using rat models to test new pharmaceuticals.

In one experiment, the team found that a state-of-the-art face detector struggled to identify pareidolic faces until additional training data featuring animal faces was added. Hamilton theorised that this could point to an evolutionary advantage of pareidolia: the ability to identify face-like patterns might have helped early humans recognise camouflaged threats in their environment, improving their chances of survival.

The study also evaluated simpler models, such as Gaussian models, to examine how pareidolic faces are represented. These basic models relied mainly on low-frequency features, capturing the overall structure of a face while ignoring fine detail. Unlike more complex neural networks, which function as ‘black boxes’, they can be analysed directly. “You can write down a closed form equation, graph it out, and ask questions about how it behaves,” Hamilton said of the advantages of simpler models.
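As a generic illustration of Hamilton’s point (not necessarily the formulation used in the paper), suppose faces and non-face backgrounds are each modelled as a multivariate Gaussian over a feature vector $\mathbf{x}$ of low-frequency image components, with means $\boldsymbol{\mu}_f, \boldsymbol{\mu}_b$ and covariances $\Sigma_f, \Sigma_b$. These symbols are assumptions introduced here for the example. A detector score based on the log-likelihood ratio then has a closed form:

$$
\begin{aligned}
s(\mathbf{x}) &= \log \mathcal{N}(\mathbf{x};\, \boldsymbol{\mu}_f, \Sigma_f) - \log \mathcal{N}(\mathbf{x};\, \boldsymbol{\mu}_b, \Sigma_b) \\
&= -\tfrac{1}{2}(\mathbf{x}-\boldsymbol{\mu}_f)^\top \Sigma_f^{-1}(\mathbf{x}-\boldsymbol{\mu}_f)
+ \tfrac{1}{2}(\mathbf{x}-\boldsymbol{\mu}_b)^\top \Sigma_b^{-1}(\mathbf{x}-\boldsymbol{\mu}_b)
- \tfrac{1}{2}\log\frac{\lvert\Sigma_f\rvert}{\lvert\Sigma_b\rvert}
\end{aligned}
$$

The $(2\pi)^{d/2}$ normalising constants cancel. Because every remaining term is explicit, one can plot $s(\mathbf{x})$ along any direction in feature space and see exactly where it crosses a detection threshold. That kind of inspection is not available for a deep network.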

Despite the promising findings, experts caution against directly equating machine perception with human perception. Greg Borenstein, a graduate of the MIT Media Lab’s Playful Systems Group, remarked, “The thing that a human being sees as a face is very different… a convolutional neural network detects very specific patterns of pixels rather than the concept of two eyes and a mouth.” Although algorithms learn patterns from training data rather than through evolutionary pressure, humans and computers respond to images in some similar ways. For instance, in parallel tests with random noise images, both humans and the machine learning models displayed a “pareidolic peak,” identifying the most faces at intermediate levels of detail.
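The noise-image test can be imitated with a toy simulation. The sketch below is not the study’s code, and every choice in it is an assumption. It uses 16×16 Gaussian-noise images and treats a Gaussian blur of width `sigma` as a stand-in for “level of detail”. Each image is scored by normalized correlation against a hypothetical hand-made face template: two dark eyes above a dark mouth. The names `face_template`, `detection_rate` and the threshold value are illustrative only.

```python
import math
import random

SIZE = 16  # toy image side length in pixels (assumption)


def gaussian_kernel(sigma):
    """1-D Gaussian kernel truncated at 3 sigma, normalized to sum to 1."""
    radius = max(1, int(3 * sigma))
    weights = [math.exp(-(i * i) / (2 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur(img, sigma):
    """Separable Gaussian blur with wrap-around borders.

    A larger sigma removes more fine detail, i.e. lowers the level of detail.
    """
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    n = len(img)
    rows = [[sum(k[j + r] * img[y][(x + j) % n] for j in range(-r, r + 1))
             for x in range(n)] for y in range(n)]
    return [[sum(k[j + r] * rows[(y + j) % n][x] for j in range(-r, r + 1))
             for x in range(n)] for y in range(n)]


def standardize(values):
    """Shift a flat list to zero mean and scale it to unit Euclidean norm."""
    mean = sum(values) / len(values)
    centered = [v - mean for v in values]
    norm = math.sqrt(sum(c * c for c in centered)) or 1.0
    return [c / norm for c in centered]


def face_template():
    """Hypothetical face template: two dark 'eye' blocks above a dark 'mouth' bar."""
    t = [[0.0] * SIZE for _ in range(SIZE)]
    for y in range(4, 7):
        for x in list(range(3, 6)) + list(range(10, 13)):
            t[y][x] = -1.0
    for y in range(11, 13):
        for x in range(5, 11):
            t[y][x] = -1.0
    return standardize([v for row in t for v in row])


TEMPLATE = face_template()


def face_score(img):
    """Pearson correlation between an image and the face template, in [-1, 1]."""
    z = standardize([v for row in img for v in row])
    return sum(a * b for a, b in zip(z, TEMPLATE))


def detection_rate(sigma, trials, threshold, seed=0):
    """Fraction of blurred-noise images whose face score exceeds the threshold."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        noise = [[rng.gauss(0.0, 1.0) for _ in range(SIZE)] for _ in range(SIZE)]
        if face_score(blur(noise, sigma)) > threshold:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    # Sweep the blur width: small sigma = fine pixel noise, large sigma = coarse blobs.
    for sigma in (0.5, 1.0, 2.0, 4.0):
        rate = detection_rate(sigma, trials=200, threshold=0.2)
        print(f"sigma={sigma}: detection rate {rate:.3f}")
```

Plotting the detection rate against `sigma` is one way to look for a peak at intermediate detail. Whether a peak appears, and where, depends on the assumed template, image size and threshold. The sketch therefore says nothing about the study’s actual numbers.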

The implications of this research extend beyond cognitive science into practical applications. One significant prospect is improving facial recognition technology, either by reducing false positives or by broadening models to recognise a wider variety of faces, including non-human ones such as cartoon faces. Hamilton also noted that the insights could feed into generative design, helping machines create user-friendly devices that look less intimidating, which is critical for products such as dental scanners for children.

The collaboration between researchers in computer science, cognitive science, and product design reflects a multidisciplinary approach to understanding both human perception and artificial intelligence. As AI and other emerging technologies continue to develop, findings like these could lead to machines that perceive the world more like humans do, even recognising the shocked face of an electrical outlet.

Source: Noah Wire Services
