As generative AI becomes increasingly essential for businesses, AWS and NVIDIA introduce NIM inference microservices to address deployment challenges, enhancing performance, security, and scalability.

Generative AI Advancements: AWS and NVIDIA’s Answer to Inferencing Challenges

Following the surge in popularity of large language model (LLM) chatbots such as ChatGPT, businesses are running into difficulties deploying generative artificial intelligence (AI) for new services. Off-the-shelf, self-hosted AI options are losing their edge: organisations struggle to customise or fine-tune generative AI models while also meeting requirements for low latency, high throughput, and robust security with existing self-hosted solutions.

Accenture offers one measure of the business value of generative AI: companies adopting LLMs and generative AI are reportedly 2.6 times more likely to see a revenue increase of 10% or more. However, not all generative AI ventures reach maturity. Gartner projects that by the end of 2025, at least 30% of generative AI initiatives will be abandoned after the proof-of-concept stage because of poor data quality, inadequate risk management, escalating costs, or unclear business value.

One principal hurdle in implementing generative AI at scale is its sheer complexity. Modern businesses must deploy a range of AI models that can generate text, images, video, speech, and synthetic data. Deploying these applications successfully requires continuous testing and maintenance of inference workloads at considerable scale. Organisations generally have to choose between third-party managed solutions and self-hosted platforms.

Third-party managed services typically offer simplicity and easy API integration, but they may conflict with organisational data security policies because data can end up being shared externally. Self-hosted solutions, on the other hand, give organisations more control but demand substantial resources, as the models require ongoing fine-tuning, API customisation, and updates.

The performance, consistent deployment, and security of generative AI models are pivotal to overcoming the various roadblocks. Developers are keen to compress and optimise models to operate effectively within existing infrastructure constraints, whilst also hoping to maintain a seamless user experience with low latency and high token throughput.

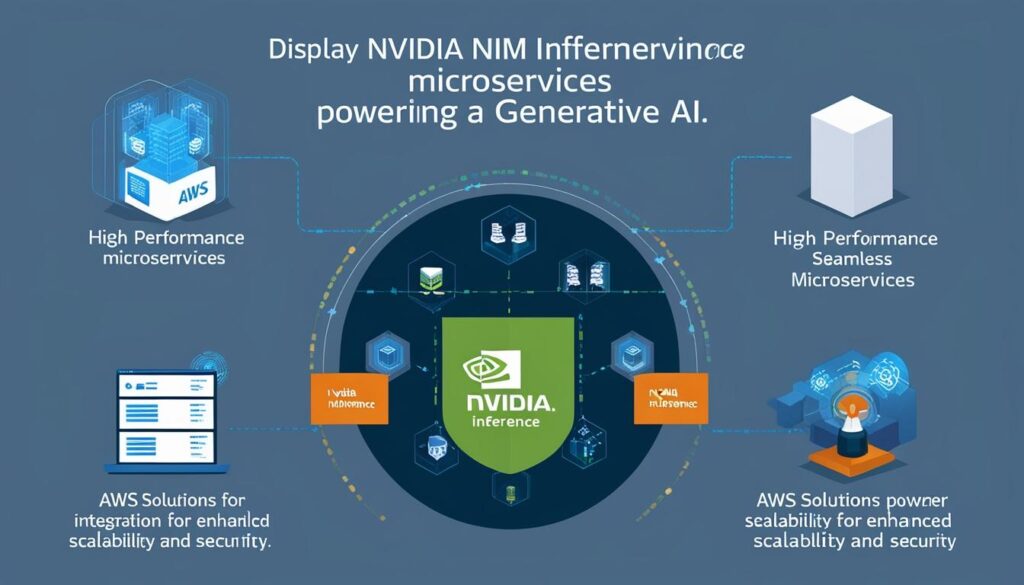

Recognising these complexities, NVIDIA has introduced NIM inference microservices, aimed at revolutionising generative AI model deployment. This suite of microservices offers a rapid, secure, and dependable way to deploy high-performance AI models. Notably, these services facilitate access to a wide array of industry-standard APIs and foundation models, such as Llama 3.1 and Mixtral, among others.
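
To make the reference to industry-standard APIs concrete, the sketch below builds (but does not send) a chat request in the widely used OpenAI-style chat-completions format. It is an illustration under stated assumptions rather than NVIDIA's documented usage: the `/v1/chat/completions` path, the `http://localhost:8000` address, and the model identifier are all assumptions not given in this article, and `build_chat_request` is a hypothetical helper name.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 64) -> urllib.request.Request:
    """Build a POST request in the OpenAI-style chat-completions format.

    Assumption: the self-hosted microservice accepts this format at
    <base_url>/v1/chat/completions. Nothing is sent over the network here.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical endpoint and model name, used for illustration only.
request = build_chat_request(
    "http://localhost:8000",
    "meta/llama-3.1-8b-instruct",
    "Summarise the benefits of self-hosted inference.",
)
# Sending it would require a running endpoint:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

Because the request body follows a common format, client code written this way would need little or no change when the model behind the endpoint is swapped.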

A key performance highlight of NVIDIA's NIM microservices is the improvement in throughput and latency. For instance, compared with leading open-source alternatives, the NVIDIA Llama 3.1 8B Instruct NIM has reportedly delivered 2.5 times higher throughput, a four times faster time to first token, and 2.2 times faster inter-token latency.
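
For readers unfamiliar with these three metrics, the sketch below shows one common way to derive them from the arrival times of streamed tokens. It is a minimal illustration, not the benchmark methodology behind the figures quoted above; the names `LatencyReport`, `summarise`, and `speedup` are hypothetical, and the timestamps are made-up values.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LatencyReport:
    time_to_first_token: float  # seconds from request to first token
    inter_token_latency: float  # mean seconds between consecutive tokens
    throughput: float           # output tokens per second for the request


def summarise(request_sent: float, token_times: Sequence[float]) -> LatencyReport:
    """Compute the three metrics from token arrival timestamps (in seconds)."""
    if len(token_times) < 2:
        raise ValueError("need at least two token timestamps")
    ttft = token_times[0] - request_sent
    gaps = [later - earlier
            for earlier, later in zip(token_times, token_times[1:])]
    itl = sum(gaps) / len(gaps)
    total = token_times[-1] - request_sent
    return LatencyReport(ttft, itl, len(token_times) / total)


def speedup(baseline: float, candidate: float, higher_is_better: bool) -> float:
    """Express a comparison as an 'N times better' factor."""
    return candidate / baseline if higher_is_better else baseline / candidate


# Made-up timestamps: request at t=0, five tokens arriving afterwards.
report = summarise(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(report)
# A latency that drops from 2.0 s to 0.5 s is a fourfold improvement.
print(speedup(2.0, 0.5, higher_is_better=False))
```

Note that throughput is a "higher is better" figure while the two latency metrics are "lower is better", which is why the speed-up factor is computed in opposite directions for each.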

Moreover, NVIDIA NIM integrates with Amazon Web Services (AWS) offerings such as Amazon SageMaker and Amazon Elastic Kubernetes Service (Amazon EKS), allowing developers to work within familiar environments. By hosting inference workloads on Amazon EKS through NIM, developers retain a high level of security and control. Enterprise-grade software, with dedicated features, rigorous validation, and continuous support from NVIDIA AI experts, is intended to ensure robust deployment.

As various sectors brace for a generative AI-driven future, businesses are eager to explore the potential capabilities of NVIDIA NIM, available through AWS Marketplace, as a viable solution to enhance scalability, security, and cost-effectiveness in deploying generative AI models.

Source: Noah Wire Services